We built caching into Stagehand. Here's how it works

Web automation is repetitive. Most workloads run the same actions thousands of times and pay full price every time. We recently shipped caching in Stagehand. Here's how it works under the hood, why we built it, and where it breaks.

Browser agents are powerful, but they are often paying LLM costs over and over again for actions that are effectively identical. Every innovation in agent performance has leaned towards making agents more “human”: better context management, memory, tools, and more. But in many web automation workloads, the winning move is the opposite: avoid reasoning entirely.

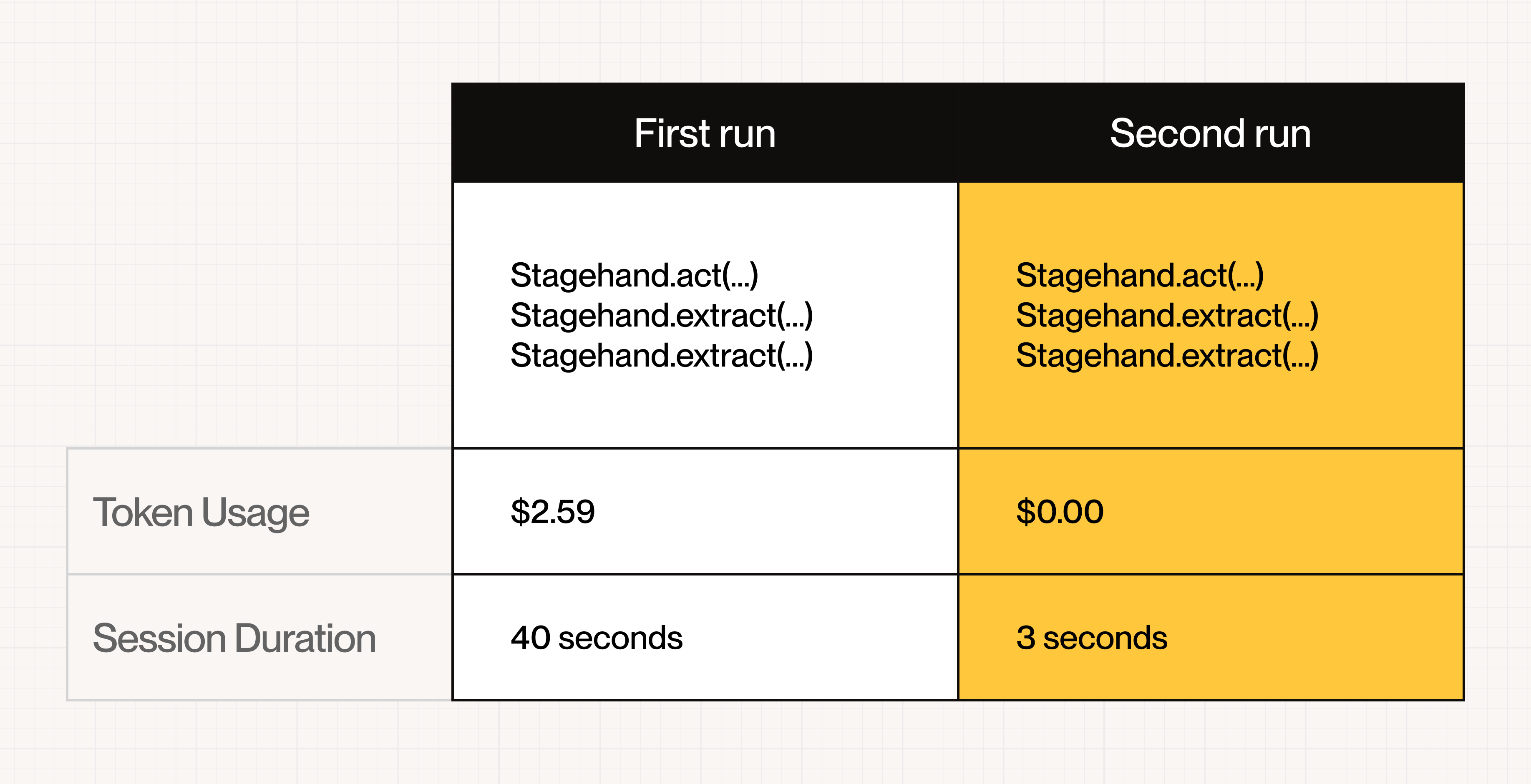

Stagehand’s caching does this by storing the resolved selector for an action (not the whole agent), then validating with high confidence that the page still matches before executing. In repetitive workloads, this can translate into speedups as high as ~80%, measured across two sequential runs: the first run inserts into the cache, and the second run reads from the cache and executes without an LLM call.

Key idea: the LLM is used to resolve an action once. After that, the system tries to reuse that past work and just execute.

What makes web automation repetitive

In practice, most high-volume web automation doesn’t look like a robot exploring the open internet.

It’s actually quite boring. Most production workloads repeat the same few flows: the same form filled thousands of times, the same buttons clicked across a fixed funnel, and the same fields extracted from the same page templates.

That repetition becomes expensive when each run starts from “first principles” and asks an LLM to interpret the UI again, and again, and again. You pay for it in latency (the round-trip to the model), cost (tokens), and non-determinism (the model doesn’t always make identical decisions even when parameters don’t change).

Caching is an attempt to collapse that repeated work into a reusable artifact.

What “Caching” means (precisely)

Stagehand performs an atomic action (extracting a field, clicking a button, filling an input). If that run succeeds, we store a cache entry on Browserbase servers that includes the selector (and supporting metadata) needed to perform the same action again.

On a future request, Stagehand tries to match the request and current page to an existing cache entry. If it matches, the SDK executes using the cached selector without calling an LLM. If it does not match, it is a cache miss, and Stagehand executes normally and then writes a new cache entry.
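To make the hit and miss paths concrete, here is a minimal sketch of the flow described above. Everything in it is hypothetical: the `CacheEntry` and `ActionRequest` shapes, the in-memory `store`, and the `resolveWithLLM` callback are stand-ins for illustration, not Stagehand's real types or API. It also skips the "only store on success" check and the actual browser execution to stay short.

```typescript
// Hypothetical shapes for illustration; not Stagehand's real types.
type CacheEntry = { selector: string };

type ActionRequest = {
  cacheKey: string; // assumed to be derived from the request and page snapshot
  instruction: string;
};

type Outcome = { source: "cache" | "llm"; selector: string };

// In-memory stand-in for the server-side store.
const store = new Map<string, CacheEntry>();

// `resolveWithLLM` stands in for the slow, model-backed path. It is
// synchronous here only to keep the sketch short.
function actWithCache(
  req: ActionRequest,
  resolveWithLLM: (instruction: string) => string,
): Outcome {
  const hit = store.get(req.cacheKey);
  if (hit !== undefined) {
    // Cache hit: reuse the stored selector, no model call.
    return { source: "cache", selector: hit.selector };
  }
  // Cache miss: resolve normally, then record the result for next time.
  const selector = resolveWithLLM(req.instruction);
  store.set(req.cacheKey, { selector });
  return { source: "llm", selector };
}

// Fake resolver that counts how often the "model" is called.
let llmCalls = 0;
const fakeLLM = (_instruction: string): string => {
  llmCalls += 1;
  return "#submit";
};

const request: ActionRequest = { cacheKey: "k1", instruction: "click submit" };
const first = actWithCache(request, fakeLLM); // miss
const second = actWithCache(request, fakeLLM); // hit
console.log(first.source, second.source, llmCalls); // llm cache 1
```

The second call never reaches the resolver, which is the whole point: the model is consulted once per distinct key.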

What we cache: selectors, prompts, action configuration. What we do not cache: passwords, API keys, or any other sensitive information.

A cache hit happens when a future request to the Stagehand API can reproduce the same cache key for the action. At a high level, that means two things are stable: the request inputs (action configuration and parameters) are equivalent, and the page snapshot is equivalent enough that it produces the same snapshot fingerprint.

This framing matters because a lot of the work is done for us by the DOM snapshot. Page text, images, element structure, state, and so on all contribute to a unique representation of the DOM, so a change in any of them changes the hash we use as a key for the cache.

The fast path: what happens when you make an action

When Stagehand receives a request to make an action, it first checks a server-side log of previous actions for the same Project ID.

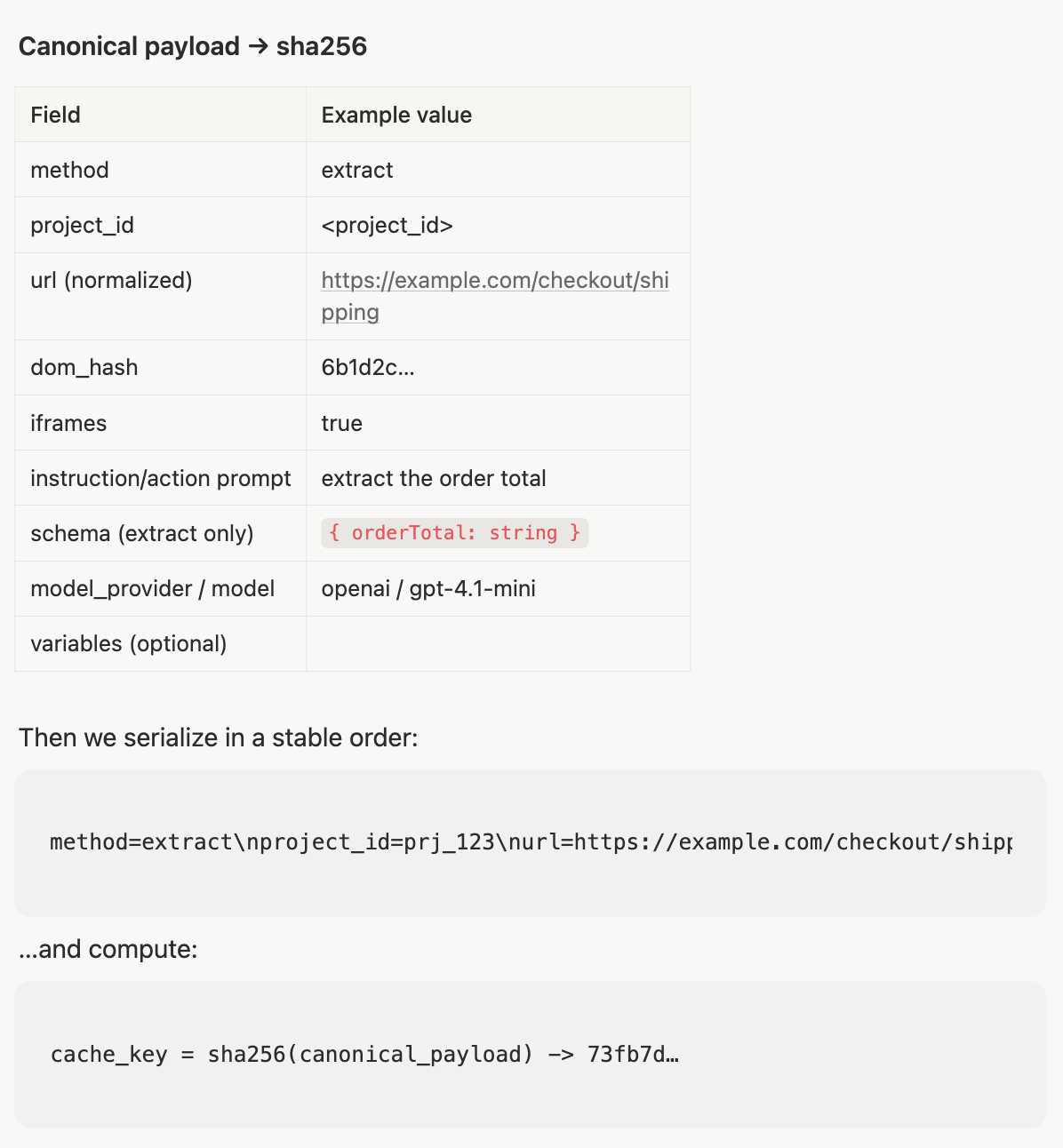

Under the hood, we hash the full set of inputs into a fixed-length SHA-256 digest. This keeps lookups fast and consistent even though the underlying inputs are structured and verbose.

What that means in practice: we take the structured cache-key components (method, normalized URL, DOM hash, project scope, plus a few method-specific fields), serialize them into a canonical string, then hash that string with SHA-256.

We only include fields that change the semantics of the action. Optional nested objects (like schema or model config) get normalized and hashed (for example, schema_sha256) so the final key stays compact.

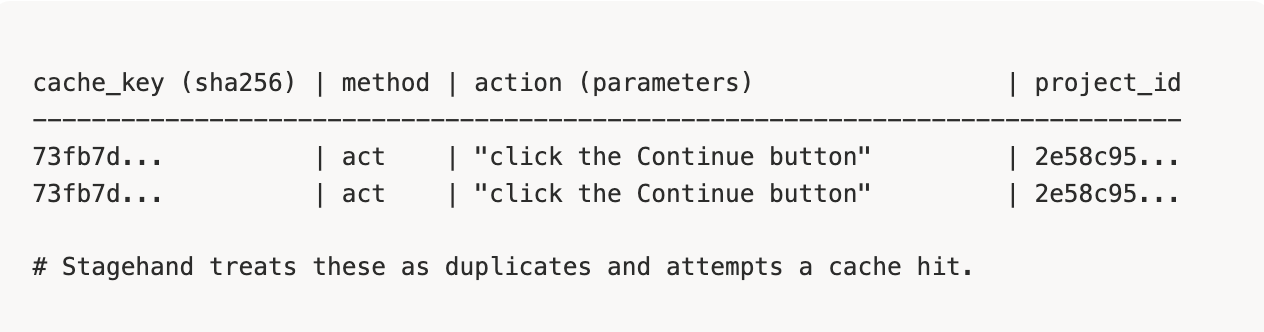

Example: two duplicate action requests (same cache key):
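As a rough illustration of that idea, the sketch below derives a key from a handful of components using Node's built-in `crypto` module. The field names in `KeyParts`, the serialization order, and the sample values are all assumptions made for this example. The real key includes method-specific fields that are not shown here.

```typescript
import { createHash } from "node:crypto";

// Hypothetical key components; field names are illustrative only.
type KeyParts = {
  method: string;
  url: string; // assumed to be normalized already
  domHash: string;
  projectId: string;
  instruction: string;
};

// Serialize fields in one fixed order so that the order in which a caller
// writes the object's properties never changes the digest.
function canonicalize(p: KeyParts): string {
  return JSON.stringify([
    ["domHash", p.domHash],
    ["instruction", p.instruction],
    ["method", p.method],
    ["projectId", p.projectId],
    ["url", p.url],
  ]);
}

// SHA-256 over the canonical string, as a 64-character hex digest.
function cacheKey(parts: KeyParts): string {
  return createHash("sha256").update(canonicalize(parts), "utf8").digest("hex");
}

const keyA = cacheKey({
  method: "act",
  url: "https://example.com/apply",
  domHash: "abc123",
  projectId: "proj_1",
  instruction: "click the submit button",
});

// Same fields written in a different order: same key.
const keyB = cacheKey({
  instruction: "click the submit button",
  projectId: "proj_1",
  domHash: "abc123",
  url: "https://example.com/apply",
  method: "act",
});

// A different DOM hash (the page changed): different key.
const keyC = cacheKey({
  method: "act",
  url: "https://example.com/apply",
  domHash: "def456",
  projectId: "proj_1",
  instruction: "click the submit button",
});

console.log(keyA === keyB); // true
console.log(keyA === keyC); // false
console.log(keyA.length); // 64
```

Canonical serialization is what makes two logically identical requests collide on purpose, while any semantic difference lands on a different key.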

On a cache hit, the goal is simple: avoid the LLM and execute deterministically.

Concretely, the request is normalized (URL + action config + variables), hashed, and used to query for candidate entries scoped to the Project ID. For each candidate, Stagehand passively compares the current page snapshot fingerprint against what the entry was recorded against. If that comparison clears a safety threshold, Stagehand reuses the stored selector and executes the action directly via the SDK.

The core challenge in validation isn't detecting change, it's deciding what change matters. A page can shift in dozens of ways between runs. The question is whether the selector you cached still points at the right thing, with high enough confidence to skip the model entirely.

This is where the speedups come from. You still have browser execution time, but you skip model time and model cost.

If this passive check detects drift, we do not try to “force” the cache entry to apply.

Instead:

- The request becomes a cache miss.

- Stagehand executes the action normally.

- Stagehand inserts a new cache entry based on the new state of the page.
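The post does not spell out how the passive comparison works, so the following is only one way such a check could look. It assumes, purely for illustration, that a fingerprint is a set of element signatures, that similarity is measured with the Jaccard index, and that the threshold is 0.95. None of these choices come from Stagehand itself.

```typescript
// ASSUMPTION: a fingerprint is modeled as a set of element signatures and
// compared with Jaccard similarity. The real check is not described in the post.
type Fingerprint = ReadonlySet<string>;

// Jaccard index: |A ∩ B| / |A ∪ B|, in the range [0, 1].
function similarity(a: Fingerprint, b: Fingerprint): number {
  if (a.size === 0 && b.size === 0) return 1;
  let shared = 0;
  a.forEach((item) => {
    if (b.has(item)) shared += 1;
  });
  return shared / (a.size + b.size - shared);
}

const SAFETY_THRESHOLD = 0.95; // hypothetical value

// Anything below the threshold is treated as drift and becomes a miss.
function decide(recorded: Fingerprint, current: Fingerprint): "hit" | "miss" {
  return similarity(recorded, current) >= SAFETY_THRESHOLD ? "hit" : "miss";
}

const recorded = new Set<string>([
  "form#apply",
  "input[name=email]",
  "button[type=submit]",
]);
const unchanged = new Set<string>([
  "form#apply",
  "input[name=email]",
  "button[type=submit]",
]);
const redesigned = new Set<string>(["form#apply", "div.banner", "a.signup"]);

console.log(decide(recorded, unchanged)); // hit
console.log(decide(recorded, redesigned)); // miss
```

A high threshold trades hit rate for accuracy, which matches the stated preference for a slow click over a wrong one.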

This bias towards safety is deliberate. Early on, we optimize for accuracy over hit rate: a wrong cached click is worse than a slow click.

Variables: the unlock for high reuse

One of the most common repetitive workflows is filling out structured forms. Job applications are a perfect example: the shape of the form is the same, and the interaction sequence is the same, but the values change per person. With variables, the same selector can be cached across multiple runs that differ only in the value being typed.

Example:

    // Cannot be reused for different values
    await stagehand.act({
      action: `type ${profile.email} into the email input`,
    });

    // Cache HIT
    await stagehand.act({
      action: "type %email% into the email input",
      variables: {
        email: profile.email,
      },
    });

The important constraint is that the page must still validate as “the same enough” page for the selector to be safe.
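One way to see why variables help: the instruction template stays byte-for-byte identical across users, and only the substituted value differs. The sketch below uses a hypothetical `fillTemplate` helper (not part of Stagehand) and assumes the cache key is derived from the template text rather than from the filled-in string.

```typescript
// Hypothetical helper: fill %name% placeholders from a variables map.
function fillTemplate(
  template: string,
  variables: Record<string, string>,
): string {
  return template.replace(/%(\w+)%/g, (match: string, name: string) => {
    const value = variables[name];
    // Leave unknown placeholders untouched rather than inserting "undefined".
    return value !== undefined ? value : match;
  });
}

const template = "type %email% into the email input";

// The template is the stable part; the values change per person.
const forAda = fillTemplate(template, { email: "ada@example.com" });
const forGrace = fillTemplate(template, { email: "grace@example.com" });

console.log(forAda); // type ada@example.com into the email input
console.log(forGrace); // type grace@example.com into the email input
```

By contrast, interpolating the value directly into the instruction produces a different string for every user, so no two requests would ever look alike.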

Where caching doesn’t work (and why)

Caching is not magic. Some pages simply don’t repeat in a way that’s safe or useful.

Sites that render dramatically differently on every load tend to miss. URLs that are randomized or overly parameterized can defeat normalization and miss as well. And some pages change in ways that are meaningful even when the DOM does not change in an obvious way, which is one of the harder classes of problems for any passive equivalence check.
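To show how URL normalization can both help and fail, here is a small sketch built on the standard `URL` and `URLSearchParams` classes. The rules it applies (drop the fragment, drop a few volatile query parameters, sort the rest) and the parameter names in `VOLATILE_PARAMS` are assumptions for illustration. The post does not list the real normalization rules.

```typescript
// ASSUMPTION: illustrative rules only; not Stagehand's actual normalization.
const VOLATILE_PARAMS = new Set<string>([
  "utm_source",
  "utm_medium",
  "utm_campaign",
  "session_id",
]);

function normalizeUrl(raw: string): string {
  const url = new URL(raw);
  url.hash = ""; // fragments do not identify a different page template

  // Keep only parameters that are not known to vary per visit.
  const kept: [string, string][] = [];
  url.searchParams.forEach((value, key) => {
    if (!VOLATILE_PARAMS.has(key)) kept.push([key, value]);
  });

  // Sort by key so parameter order does not matter.
  kept.sort(([a], [b]) => (a < b ? -1 : a > b ? 1 : 0));
  url.search = new URLSearchParams(kept).toString();
  return url.toString();
}

// Tracking parameters, ordering, and fragments normalize away.
const noisy = normalizeUrl("https://example.com/apply?utm_source=mail&b=2&a=1#top");
const clean = normalizeUrl("https://example.com/apply?a=1&b=2");
console.log(noisy === clean); // true

// A random token in the path survives normalization, so these never match.
const visit1 = normalizeUrl("https://example.com/r/8f3a2c/apply");
const visit2 = normalizeUrl("https://example.com/r/1b9d7e/apply");
console.log(visit1 === visit2); // false
```

The second pair is the failure mode: when the randomness lives somewhere the normalizer cannot safely strip, every visit looks like a new page.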

Constraints

Today, cache entries are project-scoped (by Project ID), stored server-side on Browserbase, and valid for 48 hours. These constraints keep the system simple and safe while we learn where reuse is highest.
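Expressed as code, those two constraints amount to a simple eligibility check before an entry is even considered. The `StoredEntry` shape and `isUsable` function below are hypothetical. Only the 48-hour window and the project scoping come from the post, and whether the boundary is inclusive is an assumption.

```typescript
const TTL_MS = 48 * 60 * 60 * 1000; // 48 hours, per the post

// Hypothetical entry shape for illustration.
type StoredEntry = {
  projectId: string;
  createdAtMs: number; // Unix epoch milliseconds
  selector: string;
};

// An entry is eligible only for its own project and only while fresh.
// ASSUMPTION: an entry exactly 48 hours old counts as expired.
function isUsable(entry: StoredEntry, projectId: string, nowMs: number): boolean {
  const sameProject = entry.projectId === projectId;
  const fresh = nowMs - entry.createdAtMs < TTL_MS;
  return sameProject && fresh;
}

const HOUR_MS = 60 * 60 * 1000;
const entry: StoredEntry = { projectId: "proj_1", createdAtMs: 0, selector: "#submit" };

console.log(isUsable(entry, "proj_1", 47 * HOUR_MS)); // true
console.log(isUsable(entry, "proj_1", 49 * HOUR_MS)); // false
console.log(isUsable(entry, "proj_2", 1 * HOUR_MS)); // false
```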

Measuring performance (and what “~80%” means)

The cleanest way we’ve found to talk about performance publicly is to run the same action twice, sequentially. The first run inserts into the cache. The second run reads from cache and executes without an LLM call. We report the improvement as % speedup from run 1 to run 2.
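For readers who want to reproduce the metric, the sketch below shows one reading of it. It assumes "% speedup" means the share of run-1 wall-clock time saved on run 2, which is an interpretation rather than a definition from the post, and the timings are made-up numbers for illustration.

```typescript
// ASSUMPTION: "% speedup" is read as the share of run-1 time saved on run 2.
function percentSpeedup(run1Ms: number, run2Ms: number): number {
  if (run1Ms <= 0) throw new RangeError("run1Ms must be positive");
  return (100 * (run1Ms - run2Ms)) / run1Ms;
}

// Illustrative timings only: a 5 s uncached run and a 1 s cached run.
console.log(percentSpeedup(5000, 1000)); // 80

// No change between runs means no speedup.
console.log(percentSpeedup(5000, 5000)); // 0
```

Under this reading, the browser execution time that remains on run 2 sets the ceiling: the metric can only approach 100% as the cached run's time approaches zero.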

This number varies heavily by workload. Repetitive, template-heavy flows can see extremely high hit rates and large speedups. Workloads that constantly visit new sites or run never-before-seen actions will not benefit as much.

What's next

Over time, we want to make cache entries reusable across a wider set of equivalent requests, without compromising safety.

Get started with npx create-browser-app or read more in our documentation.